Mock interview feedback is only useful when it changes the next practice session. A vague note like "be more confident" may feel true, but it does not tell you what to do. A useful note says your answer started with too much background, your ownership was unclear, your result arrived too late, or your follow-up answer avoided the tradeoff.

Most candidates get too little feedback, too late. They finish a real interview and hear nothing for days. If they are rejected, the reason may be generic. If they advance, they still may not know which answer worked. Mock interview feedback solves a different problem: it gives you evidence while you still have time to improve.

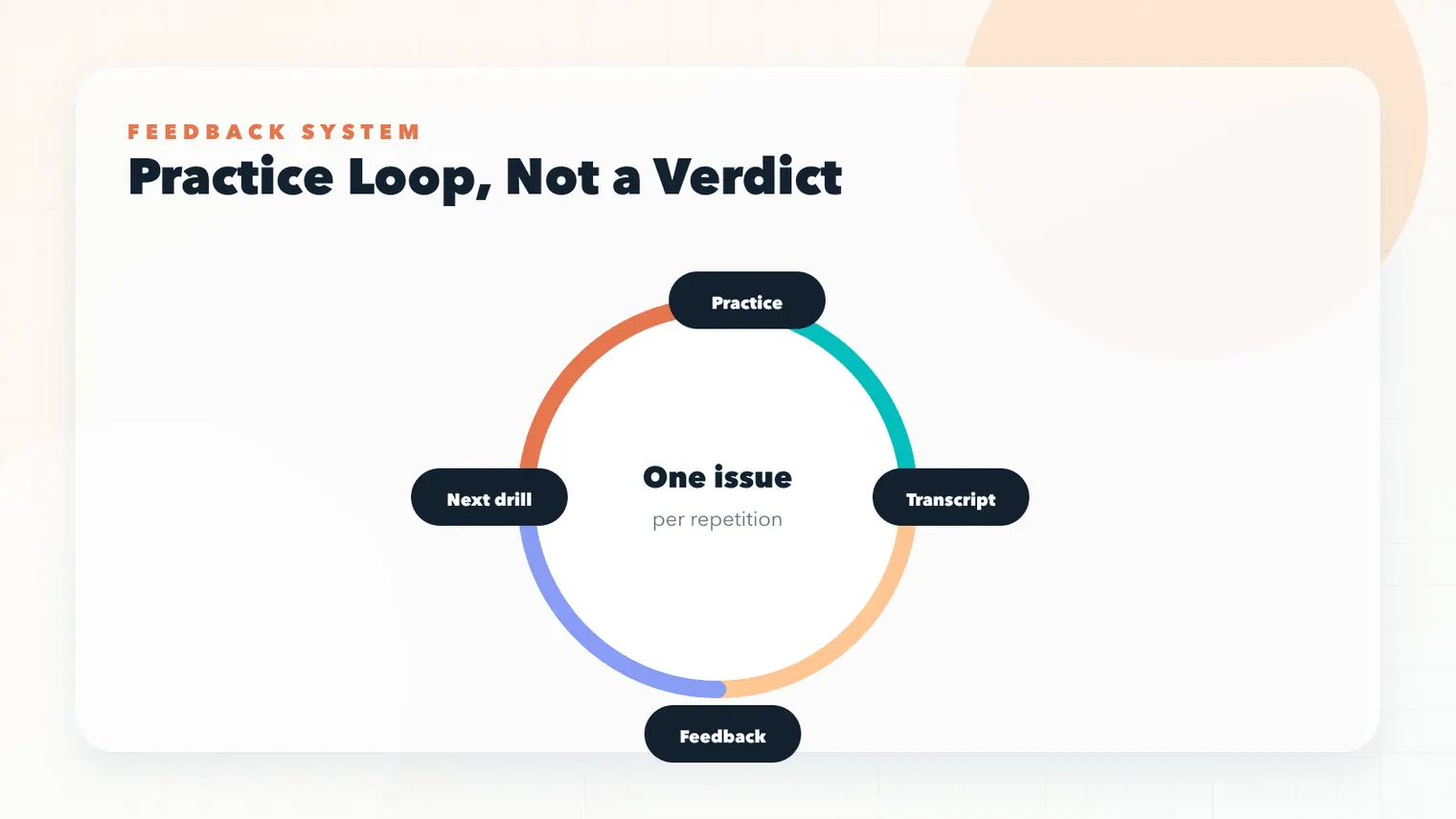

MockGPT is designed around that loop. A realistic practice session should produce a transcript, replay moments, and feedback that connects directly to the next repetition. This guide explains what good mock interview feedback looks like, how to separate useful signal from noise, and how to turn notes into a stronger interview routine.

Good mock interview feedback should change the next practice session. If a note cannot be tied to transcript evidence or turned into one drill, it is probably too vague to act on.

What good mock interview feedback should include

Good mock interview feedback is specific, observable, and tied to the role. It should not judge your personality. It should point to a behavior. "You sounded uncertain" is less useful than "your answer used three qualifiers before stating the decision." "Great story" is less useful than "the result was clear, but the tradeoff was missing."

Tufts Career Center frames interviewing as a skill that improves with practice. That is the baseline. Feedback improves the baseline by showing which part of the response needs revision.

A useful feedback report should separate at least five dimensions: clarity, relevance, structure, evidence, and follow-up resilience. Delivery matters too, especially for video interviews, but delivery feedback should not replace answer feedback. A confident vague answer is still vague.

Feedback dimensions

Turn a vague score into concrete revision

| Dimension | Question | Weak signal | Revision move |
|---|---|---|---|
| Clarity | Was the answer easy to follow? | Long setup before action. | Open with the answer, then context. |
| Relevance | Did it match the role? | Interesting story, weak job connection. | Name the role skill it proves. |
| Evidence | Was there proof? | No metric, decision, or outcome. | Add a result or observable change. |
| Follow-up | Did it survive probing? | Repeated the first answer. | Answer the missing signal directly. |

- Clarity: Open with the answer before adding context.

- Relevance: Tie the story back to the role signal.

- Evidence: Add outcome, metric, decision, or change.

- Follow-up: Answer the missing signal instead of repeating.

Mock interview feedback should be tied to the transcript

Feedback without evidence can feel arbitrary. That is why the transcript matters. If a note says your ownership was unclear, the transcript should show the exact sentence where your role disappeared. If a note says the result was buried, the transcript should show where the result finally appeared. This makes the feedback actionable instead of personal.

Northwestern Career Advancement recommends reflecting after interviews, including on what you learned about the job. For practice, reflection becomes stronger when it is tied to a written record. You can also use transcript-based interview feedback as the entry point for that review.

In MockGPT, a good feedback moment should point back to what was said. "Your answer becomes vague here" is better than "be more specific." The next practice step should be obvious: rewrite one sentence, add one metric, or prepare one follow-up.

Separate content feedback from delivery feedback

Delivery feedback is tempting because it is visible. You can notice eye contact, pace, posture, and filler words quickly. Content feedback is often more important. Did the answer prove the role requirement? Did the story show judgment? Did the candidate explain the decision? Did the result matter?

Harvard Catalyst's interview guidance emphasizes behavioral questions, communication strategies, listening, and natural pauses. That balance is useful: communication is not only smoothness; it is also relevance and responsiveness.

If you receive mock interview feedback that focuses only on delivery, translate it into answer behavior. "You seemed nervous" might mean the answer started too slowly. "You rambled" might mean there was no clear structure. "You sounded unsure" might mean you used qualifiers before naming your decision. Translation turns impression into practice.

- Content: Question match, role relevance, evidence, decision logic, and answer structure.

- Delivery: Pace, pauses, tone, camera presence, filler words, and confidence signals.

- Follow-up: How well the answer handles missing details, objections, and deeper probes.

Use mock interview feedback to choose the next drill

Feedback should reduce your practice options. If every note creates ten possible fixes, it is too broad. A strong note points to one drill. If clarity is weak, run a 60-second answer drill. If relevance is weak, map the answer back to the job description. If evidence is weak, add a metric or concrete result. If follow-ups are weak, practice two-sentence clarifications.

Tufts Career Center says interviewing is a skill and that practice helps candidates improve. That framing is important because skills improve through targeted repetition, not through general worry.

When you use the mock interview feedback workspace, treat each feedback report as a practice prescription. Do not try to fix clarity, metrics, tone, and follow-ups in one session. Choose the highest-risk issue for the role and run another round.

- Read the feedback once. Do not edit immediately.

- Find the transcript evidence. Locate the sentence behind the note.

- Name the dimension. Clarity, relevance, structure, evidence, delivery, or follow-up.

- Choose one drill. Practice the smallest fix that would improve the next answer.

- Repeat and compare. Check whether the same note appears again.

What bad mock interview feedback looks like

Bad feedback is vague, personality-based, or impossible to act on. "Be better prepared" is not enough. Prepared for what? Role knowledge, story structure, metrics, follow-ups, company research, or delivery? "Show more confidence" is also incomplete. Confidence may come from clearer structure, not from trying to sound louder.

Another bad pattern is feedback that ignores the role. A candidate for a data analyst role may need more evidence and analysis detail than a candidate for a recruiter screen. A candidate for a customer-facing role may need more explanation of empathy and communication. Interview feedback should always consider the job context.

By contrast, feedback worth acting on passes this checklist:

- The feedback names an observable behavior, not a personality judgment.

- The note points to a transcript moment or replay moment.

- The dimension is clear: clarity, relevance, evidence, structure, delivery, or follow-up.

- The next practice drill is specific and small.

- The role context is considered before deciding what to fix.

- The same issue is tracked across multiple practice sessions.

How feedback connects to a better job search system

The long-term value of interview feedback is pattern recognition. If one answer is weak, fix the answer. If five answers are weak in the same way, fix the system. Maybe your resume claims leadership but your stories do not show decisions. Maybe your job description fit is strong but your examples lack metrics. Maybe your technical stories are good but your recruiter screen sounds unfocused.

NACE's career readiness framework includes sample behaviors such as seeking feedback, identifying areas for growth, and articulating strengths. That mindset fits interview practice well: feedback is not a verdict; it is information for the next attempt.

This is where MockGPT's future product direction matters. The goal is not only to run one practice interview. It is to connect resume, job description, session transcript, feedback report, and next practice plan. You can start from mock interview feedback practice, but the product goal is to make that loop interactive.

Do not react to one awkward answer as if it explains your whole interview style. Look for the issue that repeats across sessions.

Make feedback feel less personal

Candidates often avoid practice because feedback feels uncomfortable. The best way to reduce that discomfort is to make the feedback concrete. A transcript sentence can be edited. A story map can be adjusted. A follow-up can be rehearsed. A vague feeling about being "bad at interviews" cannot be improved because it is too broad.

Good interview feedback gives you distance. It says "this answer has a clarity problem," not "you are unclear." It says "this story lacks evidence," not "you lack value." It says "this follow-up needs a decision explanation," not "you failed." That distinction keeps practice useful.

Turn every emotional reaction into one editable behavior: setup length, ownership, evidence, role relevance, or follow-up precision.

Ask humans for better feedback

Friends, mentors, and career advisors can help, but only if you ask for feedback at the right level. If you ask "How did I do?" you may get encouragement instead of useful signal. Ask a narrower question. "Was my role clear in this story?" "Did the result arrive soon enough?" "Did this answer connect to the job description?" "What follow-up would you ask me?"

Human reviewers are especially useful for credibility. They can tell you whether a story sounds believable, whether your motivation feels generic, and whether your tone matches the role. AI feedback is useful for structured repetition and transcript-linked notes. Human feedback is useful for judgment, nuance, and audience reaction. Use both when you can.

When a reviewer gives vague feedback, translate it. If they say "I wanted more detail," ask which detail: context, action, result, or decision logic. If they say "you sounded nervous," ask whether the issue was pace, volume, filler words, or answer structure. The translation step turns social feedback into practice feedback.

Track feedback across interview rounds

One practice report is helpful. A series of reports is more valuable. If clarity improves but relevance stays weak, your practice should shift toward job-description mapping. If relevance improves but follow-ups stay weak, your next sessions should focus on probing. If delivery improves but evidence stays thin, go back to the story bank and collect stronger examples.

Create a simple feedback log with four columns: date, role, strongest signal, next drill. Do not write a long journal entry. Keep the log scannable. Before the next interview, read only the latest pattern and the next drill. This keeps preparation focused instead of overwhelming.

For multi-company job searches, the log also protects you from overreacting to one interview. A single interviewer may prefer a different style. A repeated pattern across several sessions is more reliable. MockGPT's long-term value should come from spotting those patterns and turning them into a practice plan.
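If you keep the log in a spreadsheet or script rather than on paper, the four columns and the "repeated pattern" check can be sketched in a few lines of Python. This is only an illustration, not a MockGPT feature: the `LogEntry` fields mirror the four columns above, while the `repeated_drills` helper, the two-session threshold, and the sample entries are assumptions made up for the example.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class LogEntry:
    date: str              # when the practice session happened
    role: str              # target role for that session
    strongest_signal: str  # what worked best in the session
    next_drill: str        # the single drill chosen afterwards


def repeated_drills(log: list[LogEntry], min_sessions: int = 2) -> list[str]:
    """Return drills prescribed in at least `min_sessions` sessions.

    A drill that keeps coming back suggests a repeated pattern rather
    than one awkward answer. The threshold is an arbitrary assumption.
    """
    counts = Counter(entry.next_drill for entry in log)
    return [drill for drill, n in counts.most_common() if n >= min_sessions]


# Made-up sample entries, for illustration only.
log = [
    LogEntry("2024-05-01", "data analyst", "clear structure", "add a metric"),
    LogEntry("2024-05-04", "data analyst", "role relevance", "add a metric"),
    LogEntry("2024-05-09", "data analyst", "clear structure", "two-sentence follow-up"),
]

print(repeated_drills(log))  # ['add a metric']
```

A drill that shows up once is a note. A drill that shows up across several sessions is the pattern worth reading before the next interview.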

Use feedback to improve answer selection

Sometimes the answer structure is fine, but the story is wrong. No amount of polishing can make an example prove a skill it does not demonstrate. If feedback keeps saying the answer is not relevant, choose a different story. This is common when candidates use a favorite project for too many prompts.

Return to the job description. What skill is the question testing? Which story proves that skill with the least explanation? The best story is often the one with the clearest evidence, not the one with the biggest title or most impressive company name. Relevance beats prestige.

This is why feedback should connect to the story bank. Mark each story with the signals it truly proves. If a story proves leadership and conflict, use it there. If it does not prove analytical thinking, do not force it into an analytics question. Better story selection makes every answer easier.

- Name the skill the prompt is testing.

- Choose the example that proves it with the least setup.

- Drop favorite stories that do not match the role.

Know when feedback is enough

There is a point where more feedback becomes noise. If you ask five tools and three people to critique the same answer, you may get conflicting advice. One person wants more detail. Another wants more brevity. One tool emphasizes filler words. Another emphasizes metrics. At that point, return to the role and choose the feedback that best serves the interview.

Use a simple priority rule: fix the issue that most directly affects the hiring signal. For a recruiter screen, that may be motivation and clarity. For a technical hiring manager, it may be decision logic and evidence. For a final round, it may be judgment and reflection. Feedback is not equally important in every context.

The purpose of practice is not to satisfy every possible reviewer. It is to help the interviewer understand why your experience matches the job. When feedback serves that purpose, use it. When it distracts from that purpose, set it aside.

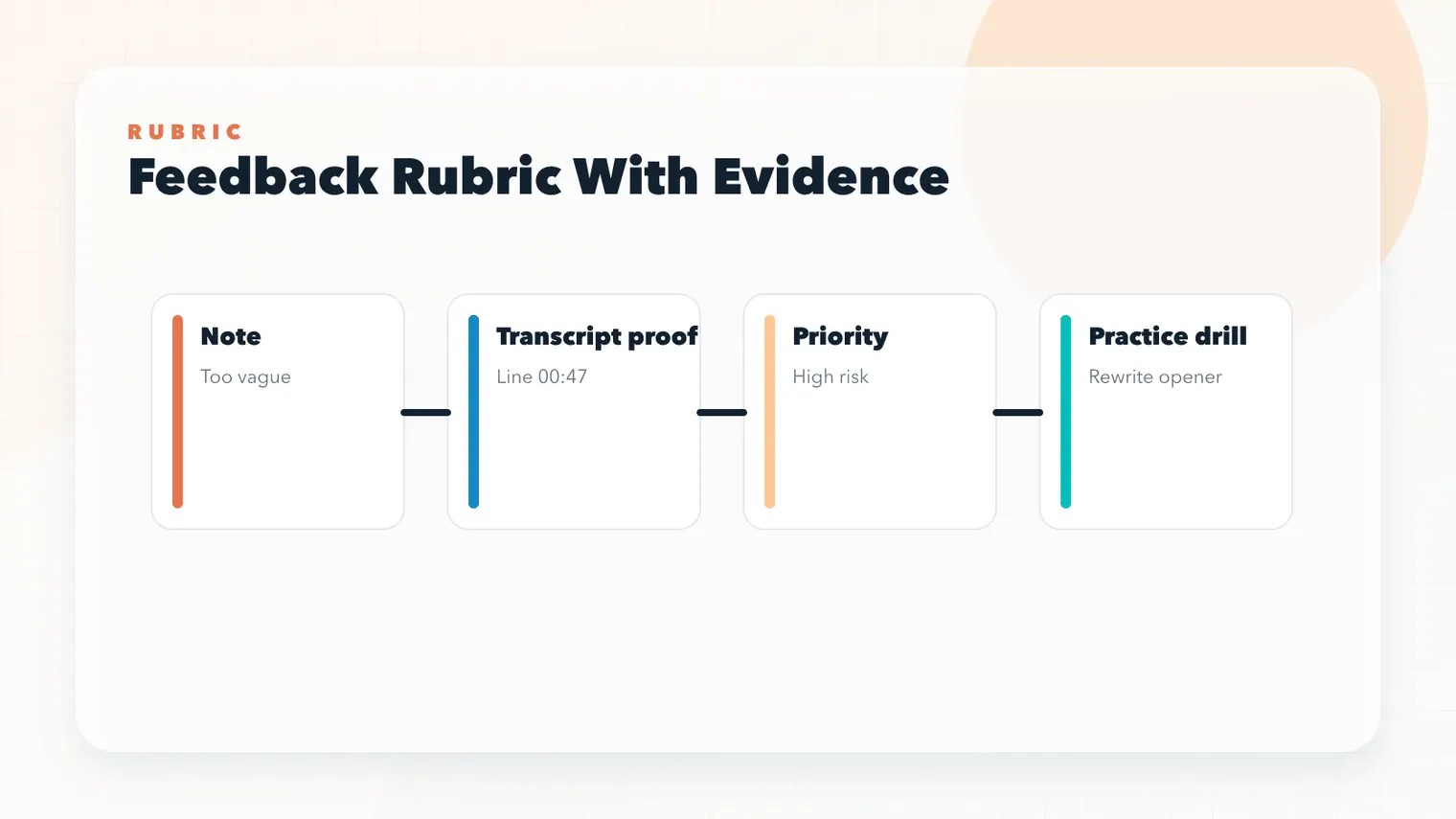

Build a feedback rubric before practicing

A rubric keeps feedback consistent. Without one, every practice session may feel different because you focus on whatever was most noticeable that day. A simple rubric can include five questions: Did I answer the question directly? Did I prove the role signal? Did I show my personal action? Did I include a result? Did I handle the follow-up?

Score each question lightly: strong, usable, weak. Do not over-engineer the score. The labels are there to help you choose the next drill. If "usable" appears repeatedly for evidence, you may need stronger metrics. If "weak" appears for follow-ups, you need probing practice. If everything is usable but nothing is strong, choose the dimension most important for the target role.

The rubric also helps when asking humans for feedback. Give them the rubric and ask them to mark only one or two dimensions. This prevents the conversation from becoming a general critique and keeps the advice tied to interview performance.
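For readers who track practice in a script, the rubric and its light scoring can be written down as a small Python sketch. The five questions and the three labels come from the text above. The `next_focus` function, the numeric ranks, and the tie-break by a `role_priority` list are assumptions chosen to illustrate "pick the weakest dimension, and let the role decide ties"; they are not a prescribed algorithm.

```python
RUBRIC = [
    "Did I answer the question directly?",
    "Did I prove the role signal?",
    "Did I show my personal action?",
    "Did I include a result?",
    "Did I handle the follow-up?",
]

# Lower rank means weaker. The numbers are only used for ordering.
RANK = {"weak": 0, "usable": 1, "strong": 2}


def next_focus(scores: dict[str, str], role_priority: list[str]) -> str:
    """Pick the rubric question to drill next.

    The weakest label wins. Ties go to the question listed earliest in
    `role_priority` (hypothetical: your own ranking for the target role).
    Questions missing from `role_priority` are treated as lowest priority.
    """
    def sort_key(question: str) -> tuple[int, int]:
        if question in role_priority:
            priority = role_priority.index(question)
        else:
            priority = len(role_priority)
        return (RANK[scores[question]], priority)

    return min(RUBRIC, key=sort_key)


# Everything is usable, nothing is strong: the role decides.
all_usable = {question: "usable" for question in RUBRIC}
print(next_focus(all_usable, ["Did I include a result?"]))
# Did I include a result?

# One weak score outranks role priority.
weak_follow_up = dict(all_usable, **{"Did I handle the follow-up?": "weak"})
print(next_focus(weak_follow_up, ["Did I include a result?"]))
# Did I handle the follow-up?
```

The point of the sketch is the ordering, not the numbers: one weak dimension gets the next drill, and the target role only breaks ties.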

Review feedback with a delay

Immediately after practice, feedback can feel sharper than it is. You may overreact to one awkward answer or ignore a useful note because you feel defensive. If the session was intense, read the feedback once, then return to it later. A short delay helps you separate emotion from signal.

When you return, ask three questions. Is the note supported by transcript or replay evidence? Is the note relevant to the target role? Can I turn it into one drill? If the answer is yes, use it. If the answer is no, archive it without making it the focus of your next session.
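The three questions work as a simple filter. The Python sketch below is one way to express it, assuming a note is kept only when all three answers are yes. The `Note` fields, the `triage` name, and the sample notes are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Note:
    text: str
    has_evidence: bool      # supported by transcript or replay evidence?
    relevant_to_role: bool  # relevant to the target role?
    drill: Optional[str]    # the one drill it turns into, or None


def triage(notes: list[Note]) -> tuple[list[Note], list[Note]]:
    """Split notes into (use, archive) using the three questions."""
    use, archive = [], []
    for note in notes:
        passes = (
            note.has_evidence
            and note.relevant_to_role
            and note.drill is not None
        )
        (use if passes else archive).append(note)
    return use, archive


# Made-up sample notes, for illustration only.
notes = [
    Note("Result arrived in the last sentence", True, True, "lead with the result"),
    Note("Be more confident", False, True, None),
]

use, archive = triage(notes)
print([note.text for note in use])      # ['Result arrived in the last sentence']
print([note.text for note in archive])  # ['Be more confident']
```

Archived notes are not deleted. They simply stay out of the next session's plan.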

This habit keeps feedback from becoming a confidence drain. The point is not to collect criticism. The point is to choose better practice.

Connect feedback to your next real interview

Practice feedback should not stay inside the practice environment. Before a real interview, translate your latest notes into three reminders. For example: answer the question first, name my ownership, and give the result before the lesson. Put those reminders near your story bank. Do not try to carry a long feedback report into the interview in your head.

After the real interview, compare what happened. Did the reminders help? Did a new follow-up expose a different gap? Did the interviewer respond well to a revised story? This comparison turns real interviews into learning without making them feel like experiments.

Over several rounds, the feedback loop should become calmer. You know what to inspect. You know how to choose one fix. You know when to stop. That is the difference between feedback as judgment and feedback as a practice system.

Carry three reminders into the interview, not the whole feedback report. The goal is usable focus, not more notes.

Use feedback to protect confidence

Feedback should make practice more specific, not make you smaller. If a note leaves you thinking "I am bad at interviews," it has not been translated yet. Translate it into a behavior. "I am bad at interviews" might become "my setup is too long" or "I need a clearer result sentence." A behavior can be practiced. A global self-judgment cannot.

This matters because confidence is partly built from evidence. When you see that one drill improves one answer, you trust the process more. When you trust the process, practice becomes less emotionally expensive. You are no longer waiting for someone to declare that you are good enough; you are watching specific signals improve.

MockGPT's feedback loop should support that style of confidence. It should be direct enough to be useful, but concrete enough that the next step is obvious. The best feedback does not flatter you or scare you. It gives you a handle.

- Name the exact answer behavior that changed.

- Keep a short record of the drill that worked.

- Archive fixed issues so they do not crowd the next session.

Archive what you have already fixed

Do not keep every old weakness in front of you forever. If a repeated issue improves across several sessions, archive it. Keeping fixed problems in your active notes makes preparation feel heavier than it is. Your current practice plan should show the next useful problem, not every problem you have ever had.

Archiving also helps you notice progress. Interview preparation can feel endless because there is always another answer to improve. A short archive says: this used to be weak, and now it is stable enough. That record can be especially helpful before high-stakes rounds when nerves make you forget how much you have already improved.

Keep the archive short. A few dated notes are enough: what the issue was, what drill fixed it, and which answer proved the change. When you can see improvement in plain language, feedback becomes less threatening and more like a normal part of skill development.

Then let the archive stay in the background. It is there when confidence drops, but it should not compete with the current practice plan. The active plan should stay small enough that you can remember it during the next answer.

This is how feedback becomes sustainable. You keep the lesson, reduce the clutter, and enter the next session with one clear behavior to practice instead of a pile of old criticism.

Clear beats comprehensive when the interview is close. Your attention needs a single next action that you can actually remember during the real interview.

FAQ: interview feedback

What is the most useful feedback after a practice interview?

The most useful feedback points to a specific answer behavior and gives one next drill, such as shortening setup, adding evidence, or clarifying ownership.

Should I fix every piece of feedback at once?

No. Choose the issue most likely to affect the target role, practice that issue, and compare the next transcript or replay.

How do I know if feedback is too vague?

If you cannot point to a transcript moment or choose a specific drill, the feedback is probably too vague to use directly.